Written by: Mariana Fonseca, Editorial Team, AI Growth Agent

Key Takeaways

- Hugging Face Transformers anchors NLP and multimodal work with a deep model hub and strong community support for text generation and classification.

- Stable Diffusion and FLUX.1 lead image generation, with FLUX.1 standing out for typography quality and reliable prompt adherence.

- Ollama and llama.cpp simplify local LLM deployment, and Ollama’s one-command setup keeps it accessible for all skill levels.

- PyTorch and TensorFlow serve as flexible frameworks for custom model development, balancing research freedom with production readiness.

- Scale your open source AI projects for AI search visibility with AI Growth Agent’s programmatic SEO demo to increase citations and authority.

Top 10 Open Source Generative AI Platforms for 2026

1. Hugging Face Transformers for Text and Multimodal Workflows

Hugging Face acts as a central hub for open source AI development, and its Transformers library powers a wide range of production applications. The platform’s sentence-transformers repository has 18,536 stars and 250 contributors as of April 2026, which signals strong and ongoing community adoption.

Pros: Extensive model hub, smooth integrations, detailed documentation, active community support

Cons: Overwhelming for beginners, requires familiarity with transformer architectures

Quick Setup:

- Install: `pip install transformers torch`

- Load model: `from transformers import pipeline; generator = pipeline('text-generation', model='gpt2')`

- Generate: `result = generator("Hello world", max_length=50)`
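The quick-setup steps can be folded into one small script. This is a minimal sketch, assuming `transformers` and `torch` are installed and the `gpt2` weights can be downloaded on first use. The helper names `extract_texts` and `generate` are illustrative and not part of the library. The sketch passes `max_new_tokens`, which bounds only the generated continuation, where the quick setup uses `max_length`.

```python
from typing import Any


def extract_texts(outputs: list[dict[str, Any]]) -> list[str]:
    """Pull the generated strings out of a text-generation pipeline result.

    The pipeline returns a list of dicts, each with a "generated_text" key.
    """
    return [item["generated_text"] for item in outputs]


def generate(prompt: str, model_name: str = "gpt2", max_new_tokens: int = 40) -> list[str]:
    """Run a text-generation pipeline and return plain strings (hypothetical helper)."""
    # Imported lazily so extract_texts stays usable without transformers installed.
    from transformers import pipeline

    generator = pipeline("text-generation", model=model_name)
    outputs = generator(prompt, max_new_tokens=max_new_tokens)
    return extract_texts(outputs)
```

Calling `generate("Hello world")` returns a list of strings, one per generated sequence.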

Use Cases: Natural language processing, text classification, question answering, multilingual applications

2. Stable Diffusion / Diffusers for Flexible Image Generation

Stable Diffusion remains a core choice for image generation, with SD 1.5, XL, and 3.5 Large covering a wide range of quality and hardware profiles. Benchmarks for SDXL show strong performance, and many creators run it comfortably on consumer GPUs.

Pros: Mature ecosystem, extensive LoRA support, flexible hardware options, strong community

Cons: Higher VRAM needs for newer models, occasional hand and face distortions

Quick Setup:

- Install: `pip install diffusers torch`

- Initialize: `from diffusers import StableDiffusionPipeline; pipe = StableDiffusionPipeline.from_pretrained("runwayml/stable-diffusion-v1-5")`

- Generate: `image = pipe("A serene landscape").images[0]`

Hardware Requirements: SD 1.5 runs on 4GB VRAM minimum (8GB recommended), and SDXL requires 12GB VRAM minimum (24GB recommended).
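The VRAM figures above can be turned into a quick compatibility check. The sketch below is illustrative only: the thresholds are copied from the hardware requirements quoted in this section, and the function names `fit_level` and `runnable_models` are made up for the example.

```python
# Minimum and recommended VRAM in GB, taken from the figures quoted in this section.
VRAM_GB = {
    "SD 1.5": {"minimum": 4, "recommended": 8},
    "SDXL": {"minimum": 12, "recommended": 24},
}


def fit_level(model: str, available_gb: float) -> str:
    """Classify a GPU as 'recommended', 'minimum', or 'insufficient' for a model."""
    needs = VRAM_GB[model]
    if available_gb >= needs["recommended"]:
        return "recommended"
    if available_gb >= needs["minimum"]:
        return "minimum"
    return "insufficient"


def runnable_models(available_gb: float) -> list[str]:
    """List the models whose minimum VRAM requirement fits the given GPU."""
    return [name for name in VRAM_GB if fit_level(name, available_gb) != "insufficient"]
```

For example, `runnable_models(8)` keeps only SD 1.5, while a 12GB card clears the minimum for both models.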

3. PyTorch for Custom Generative Model Research

PyTorch gives researchers and engineers a flexible foundation for custom generative models and experimental architectures. The PyTorch GitHub repo has 98,892 stars as of April 2026, after roughly 9.7 years on GitHub, which reflects long-term adoption across academia and industry.

Pros: High flexibility, dynamic computation graphs, strong research adoption

Cons: Steeper learning curve, more manual performance tuning

Quick Setup:

- Install: `pip install torch torchvision`

- Create model: `import torch; import torch.nn as nn; model = nn.Sequential(nn.Linear(784, 128), nn.ReLU(), nn.Linear(128, 10))`

- Train: `optimizer = torch.optim.Adam(model.parameters())`

Performance: An RTX 4090 delivers competitive throughput on SDXL and similar large models.

4. TensorFlow for Production-Grade Deployments

TensorFlow continues to serve teams that need stable, production-ready infrastructure for large-scale AI systems. The framework holds approximately 194,000 GitHub stars as of April 2026, and its ecosystem covers mobile, edge, and distributed training.

Pros: Production-focused tooling, strong mobile support, rich ecosystem

Cons: More complex than PyTorch for research workflows, steeper learning curve for experimentation

Use Cases: Enterprise AI systems, mobile AI applications, large-scale distributed training

5. Llama.cpp for Efficient Local LLM Inference

Llama.cpp focuses on efficient local inference for LLMs on CPUs and modest GPUs. The project reached 100,000 GitHub stars in March 2026 with over 15,000 forks and 450 contributors, outpacing the early growth of PyTorch and TensorFlow and highlighting demand for lightweight inference.

Pros: Strong quantized performance, CPU optimization, minimal dependencies

Cons: Inference only, requires model conversion to the GGUF format

Quick Setup:

- Clone: `git clone https://github.com/ggerganov/llama.cpp && cd llama.cpp`

- Compile: `cmake -B build && cmake --build build --config Release`

- Run: `./build/bin/llama-cli -m model.gguf -p "Hello world"`

6. Google Magenta for Music and Audio Generation

Google Magenta targets music and audio generation, giving creative teams specialized tools that general-purpose frameworks lack. The project supports algorithmic composition, performance modeling, and experimental audio synthesis.

Pros: Music-focused features, creative tooling, Google-backed research

Cons: Narrower scope than general frameworks, smaller community

Use Cases: Music generation, audio synthesis, interactive creative projects

7. FLUX.1 for High-Fidelity Image and Typography

FLUX.1 from Black Forest Labs pushes image generation quality, especially for text rendering inside images. FLUX.1 outperforms Stable Diffusion in typography, consistently producing legible text across fonts and styles.

Pros: Superior text rendering, strong prompt adherence, fast generation with 1–4 steps for schnell

Cons: Higher VRAM requirements, younger ecosystem and tooling

Quick Setup:

- Install ComfyUI with FLUX.1 support

- Download the FLUX.1 schnell model

- Configure a workflow for 1–4 step generation

8. ComfyUI for Visual AI Workflow Orchestration

ComfyUI offers a node-based interface for building complex image generation pipelines without writing glue code. Native FLUX.1 support and a large node library make it a central tool for 2026 image workflows.

Pros: Visual workflow design, granular control, extensible architecture

Cons: Learning curve for node-based thinking, more setup time for simple tasks

Use Cases: Complex image workflows, batch processing, advanced prompt and control setups
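The idea behind a node-based workflow is that each node declares which upstream outputs it consumes, and the tool works out a valid execution order. The sketch below illustrates that concept with Python's standard `graphlib` module. The node names are hypothetical and this is not ComfyUI's own API or workflow format.

```python
from graphlib import TopologicalSorter

# Each node maps to the set of nodes whose outputs it consumes (hypothetical names).
WORKFLOW = {
    "load_model": set(),
    "encode_prompt": {"load_model"},
    "sample": {"load_model", "encode_prompt"},
    "decode_image": {"sample"},
    "save_image": {"decode_image"},
}


def execution_order(graph: dict[str, set[str]]) -> list[str]:
    """Return an order in which every node runs after all of its inputs."""
    return list(TopologicalSorter(graph).static_order())


order = execution_order(WORKFLOW)
```

Here `order` starts with `load_model` and ends with `save_image`, because every node waits for the nodes it depends on.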

9. Ollama for One-Command Local LLMs

Ollama streamlines local LLM deployment so developers can test and ship models quickly. The project has approximately 167,000 GitHub stars and 460 contributors as of late March 2026, which reflects strong interest in simple local tooling.

Pros: Simple installation, one-command model runs, broad model library

Cons: Less customization than lower-level frameworks

Quick Setup:

- Install Ollama

- Run: `ollama run llama3.1`

- Chat directly in the terminal
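Beyond the terminal chat, a running Ollama server also exposes a local HTTP API, by default on port 11434. The following is a minimal sketch using only the Python standard library. It assumes the server is running locally and the `llama3.1` model has already been pulled. The helper names `build_request` and `generate` are illustrative.

```python
import json
import urllib.request

# Default local endpoint for single-prompt generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a POST request asking for one complete, non-streamed response."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request to a local Ollama server and return the generated text."""
    with urllib.request.urlopen(build_request(model, prompt), timeout=120) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    return body["response"]
```

With the server running, `generate("llama3.1", "Hello world")` returns the model's reply as a single string.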

10. LangChain Components for AI Agents and Workflows

LangChain’s open source components provide chains, agents, and memory systems that connect models to tools and data. Its modular design integrates with most platforms in this list, which turns raw models into full applications.

Pros: Modular building blocks, wide integrations, fast application development

Cons: Overkill for simple tasks, frequent API changes to track

Use Cases: AI agents, multi-step workflows, retrieval-augmented and reasoning-heavy applications
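The chain idea can be shown without any framework: each step takes the previous step's output, and the chain is their composition. The sketch below is a concept illustration in plain Python, not LangChain's API. Every name in it is hypothetical, and `fake_model` stands in for a real LLM call.

```python
from typing import Callable

Step = Callable[[str], str]


def chain(*steps: Step) -> Step:
    """Compose steps left to right into a single callable."""
    def run(value: str) -> str:
        for step in steps:
            value = step(value)
        return value
    return run


def prompt_template(question: str) -> str:
    """Wrap the user question in a fixed instruction."""
    return f"Answer briefly: {question}"


def fake_model(prompt: str) -> str:
    """Stand-in for a real LLM call; it only echoes the prompt."""
    return f"[model output for: {prompt}]"


def strip_brackets(text: str) -> str:
    """Output parser: remove the surrounding square brackets."""
    return text.strip("[]")


qa_chain = chain(prompt_template, fake_model, strip_brackets)
answer = qa_chain("What is GGUF?")
```

Swapping `fake_model` for a function that calls a local or hosted model leaves the rest of the chain unchanged, which is the main appeal of the modular design.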

The following table highlights how these platforms differ in community size, modality, and hardware needs so you can match tools to your constraints.

| Platform | GitHub Stars (2026) | Primary Modality | Min VRAM |
|---|---|---|---|
| Hugging Face (sentence-transformers) | 18.5k | Text/Multimodal | Varies |
| Stable Diffusion | High (ecosystem) | Image | 4GB |
| PyTorch | 98.9k | Framework | Varies |
| FLUX.1 | ~25k (growing) | Image | Varies |
| Ollama | ~167k | Text | Varies |

Common Setup Pitfalls and Practical Platform Tradeoffs

GPU and VRAM limits still block many teams from running the newest open source generative models. FLUX.1 Dev requires 24GB+ VRAM for optimal performance compared to Stable Diffusion’s more modest requirements mentioned earlier, which creates accessibility challenges for developers on consumer hardware.

Fine-tuning hurdles often come from weak documentation and tangled dependency chains. To address these issues, teams can use containerized deployments with Docker to isolate environments, or run quantized models through llama.cpp to cut memory usage. These tradeoffs matter when choosing platforms: llama.cpp leads in text generation efficiency, while FLUX.1 excels in prompt adherence for image generation.
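A rough way to see why quantized models cut memory usage is to estimate the size of the weights alone: parameter count times bits per weight, divided by eight to get bytes. The sketch below applies that arithmetic to a hypothetical 7-billion-parameter model. It ignores the KV cache, activations, and the small per-block overhead that real quantization formats add, so treat the numbers as lower bounds.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    bytes_total = n_params * bits_per_weight / 8
    return bytes_total / 1e9


# Hypothetical 7B-parameter model at three nominal precisions.
N_PARAMS = 7e9
fp16_gb = weight_memory_gb(N_PARAMS, 16)  # 16-bit weights
int8_gb = weight_memory_gb(N_PARAMS, 8)   # 8-bit quantization
int4_gb = weight_memory_gb(N_PARAMS, 4)   # 4-bit quantization
```

Under these assumptions the weights shrink from about 14 GB at 16 bits to about 3.5 GB at 4 bits, which is the difference between needing a high-end GPU and fitting on modest hardware.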

Memory management also shapes which tools feel practical in daily work. Gradient checkpointing, mixed precision training, and model sharding across multiple GPUs reduce crashes and training slowdowns. Teams that pick platforms with active communities and clear documentation usually spend less time debugging and more time shipping features.

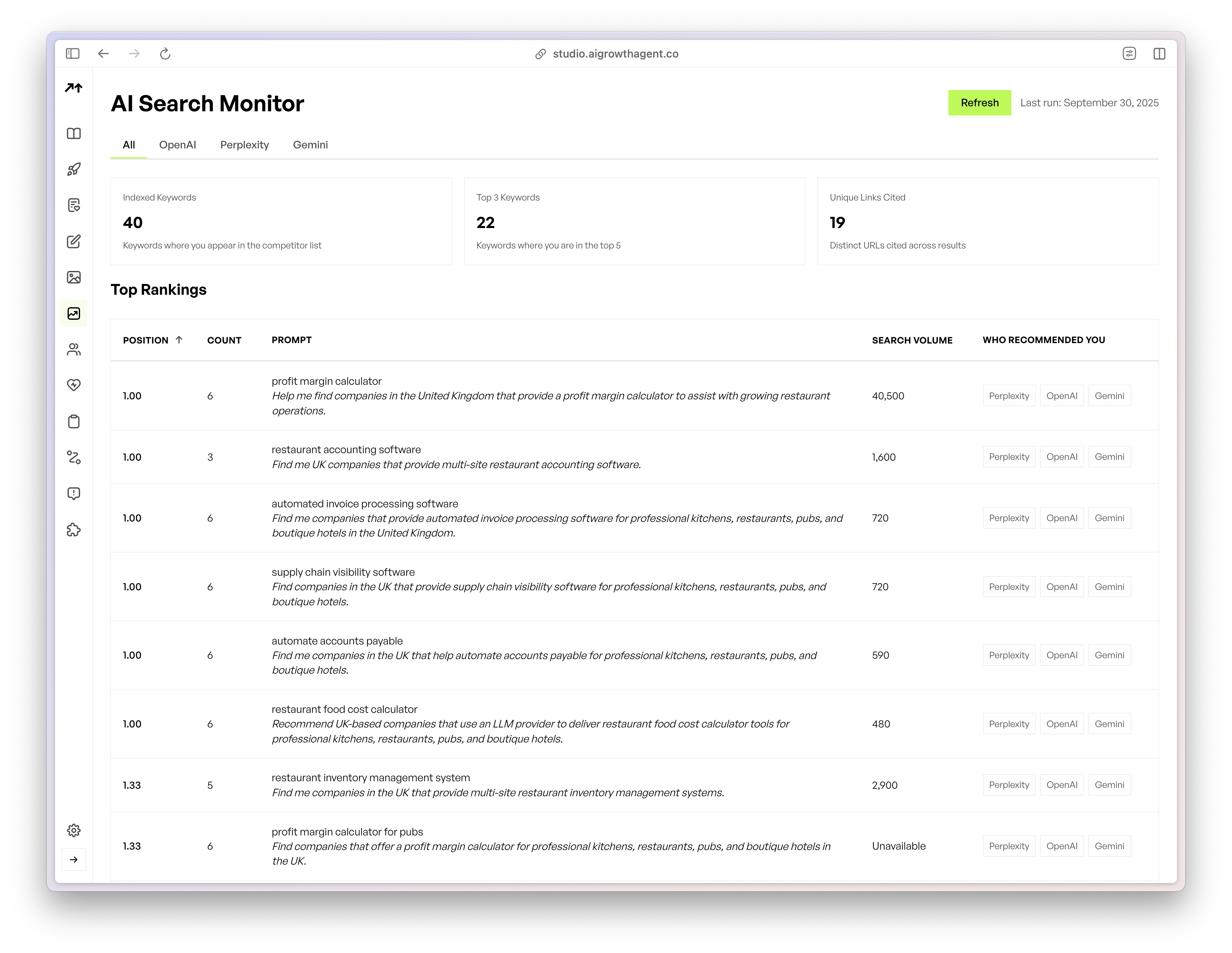

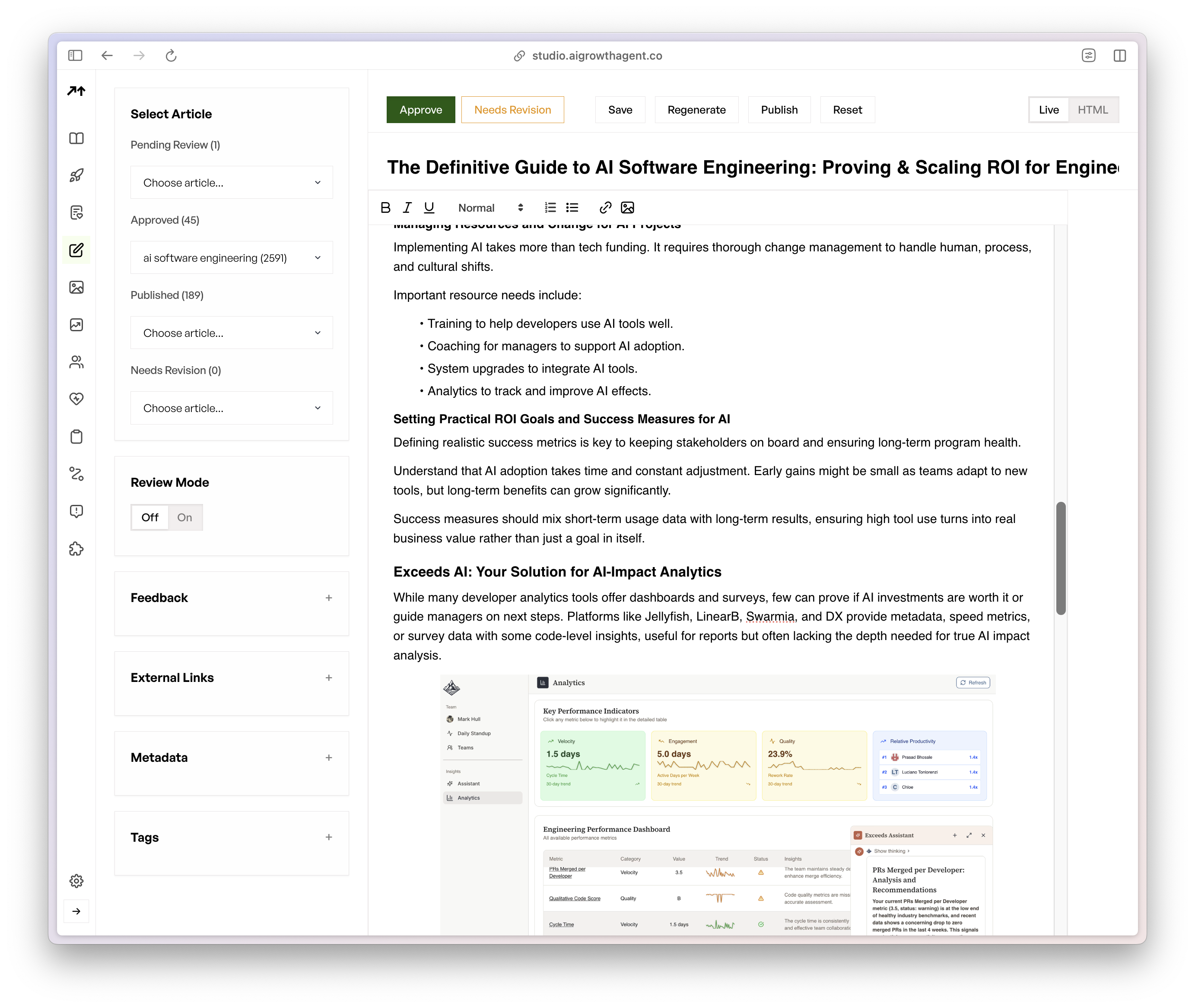

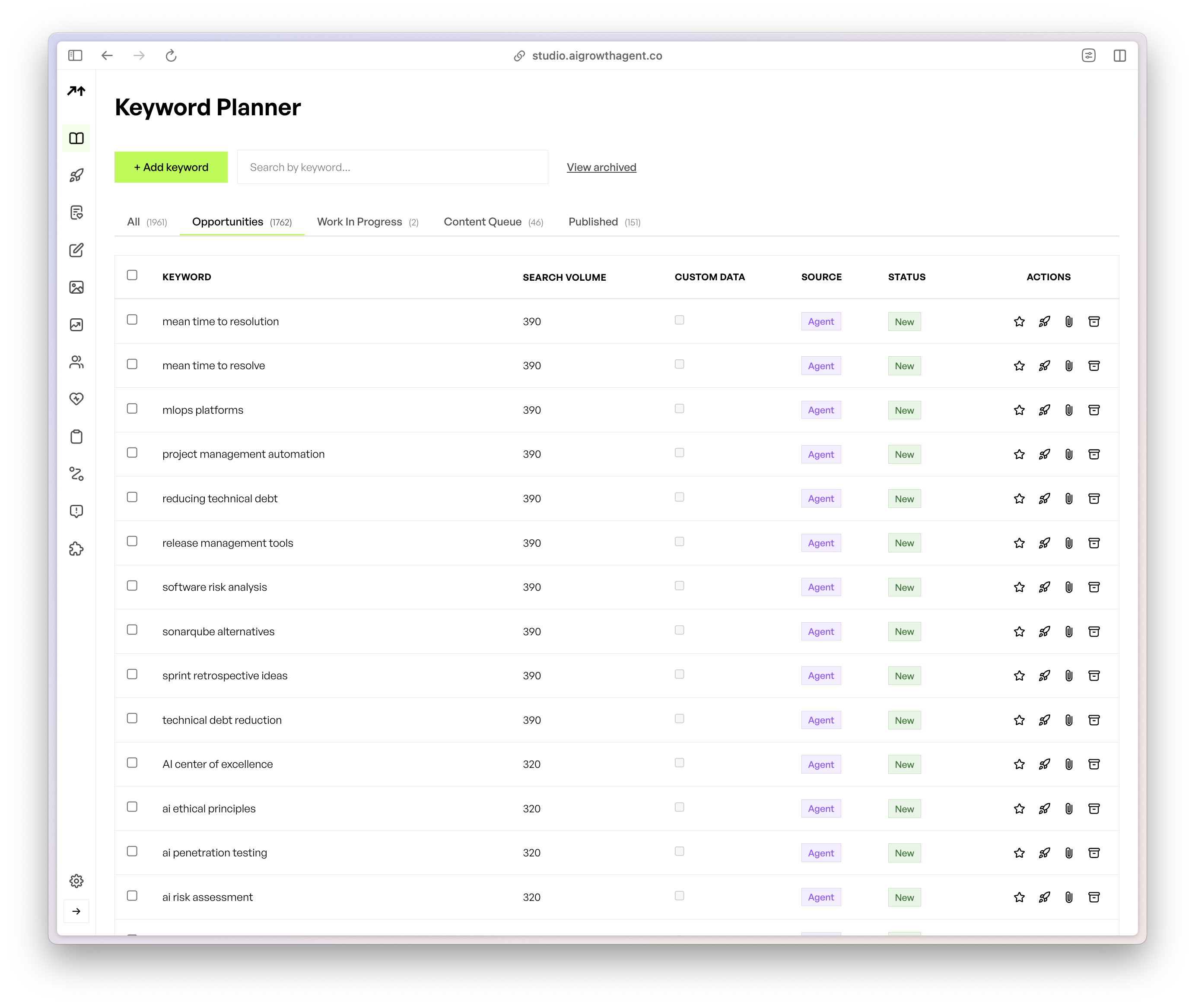

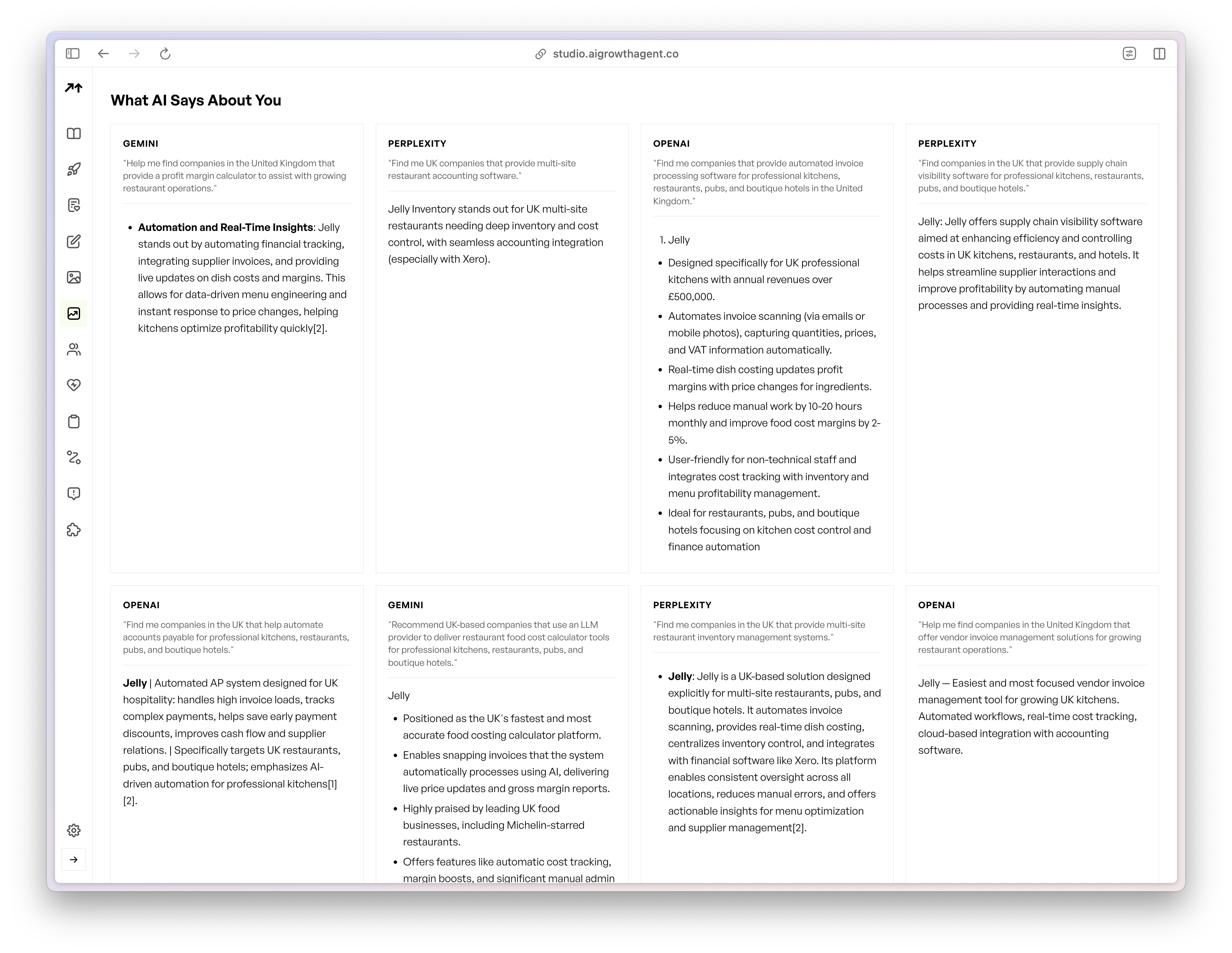

Scaling Open Source Gen AI with Programmatic SEO

AI search visibility now depends on consistent, large-scale content that manual workflows cannot maintain. Modern AI search engines such as ChatGPT and Perplexity favor structured, authoritative sources that publish at a pace only automation can support.

AI Growth Agent’s programmatic SEO technology automates deployment and scaling of open source AI content across thousands of pages. Our manifesto-driven, multi-tenant approach helps your AI work earn citations across major AI search platforms. Schedule a consultation session to see how programmatic content automation can expand your open source AI footprint.

Key Takeaways for Choosing Your Open Source Stack

Platform selection works best when you weigh GitHub activity, setup simplicity, and how current each platform's feature set is. FLUX.2’s November 2025 release illustrates cutting-edge capabilities, while long-standing platforms such as Hugging Face provide stability and deep documentation. Hardware limits, team skills, and scaling plans should guide your final stack decisions.

Frequently Asked Questions

What are the best free open source generative AI platforms?

The top three free open source generative AI platforms for 2026 are Hugging Face Transformers for broad NLP and multimodal tasks, Stable Diffusion for image generation with strong community support, and Ollama for simplified local LLM deployment. These options provide commercial-friendly licenses and active development communities.

What are the best Hugging Face alternatives?

Ollama works well as an alternative for local LLM deployment with a streamlined setup. ComfyUI offers advanced image generation workflows that go beyond Hugging Face’s standard interfaces. For specialized needs, llama.cpp delivers optimized inference for quantized models, and PyTorch offers maximum flexibility for custom model development.

Which open source generative AI platform is easiest to set up?

Ollama stands out as the easiest platform to set up, because it uses a single command to download and run large language models locally. Its straightforward installation and simple interface suit developers new to open source AI deployment while still supporting production-grade use cases.

Where can I find open source generative AI platforms on GitHub?

Popular repositories include llama.cpp with 100,000+ stars, Ollama with approximately 167,000 stars, and PyTorch with 98,900 stars. Hugging Face’s sentence-transformers repository holds 18.5k stars, and the broader Transformers ecosystem ranks among the largest open source AI communities on GitHub.

What are the best open source generative AI platforms for 2026?

FLUX.1 leads image generation for typography and prompt adherence, and Ollama dominates local LLM deployment for ease of use. Hugging Face remains central for end-to-end AI development, while emerging platforms such as FLUX.2 and HunyuanImage-3.0 represent the frontier of open source capabilities in 2026.

Conclusion

The 2026 open source generative AI ecosystem gives teams powerful alternatives to vendor lock-in. From FLUX.1’s advanced image generation to Ollama’s streamlined LLM deployment, these platforms form a practical toolkit for building innovative AI applications on your own terms.

Success in the AI search era depends on both smart platform choices and strategic content scale. Schedule a demo to see if you’re a good fit for AI Growth Agent’s programmatic SEO technology and turn your open source AI expertise into durable market authority.